Amazon Redshift Spectrum supports many common data formats: text, Parquet, ORC, JSON, Avro, and more. You can query data in its original format or convert it to a more efficient one based on data access patterns, storage requirements, and so on. For example, if you often access a subset of columns, a columnar format such as Parquet or ORC can greatly reduce I/O by reading only the needed columns. How to convert from one file format to another is beyond the scope of this post. For more information on how this can be done, see resources such as the Amazon Redshift data lake export feature.

Even though Redshift Spectrum nodes are queried using standard SQL, there are some commands, filters, and aggregations that cannot be pushed down to them. For example, avoid the SQL commands DISTINCT and ORDER BY. If they're part of a query, the query planner cannot hand that work to the Spectrum nodes, and it is carried out on the Redshift cluster instead. Spectrum-friendly operations are GROUP BY clauses, comparison conditions, pattern-matching conditions such as LIKE, and aggregate functions such as COUNT, SUM, AVG, MIN, and MAX. Sticking to these helps prevent Spectrum nodes from doing unnecessary scans.
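As a rough illustration of the query guidance above, the following Python sketch flags clauses in a query string that the post says to avoid. The function name `find_non_pushdown_clauses`, the sample queries, and the external table name `spectrum.sales` are all hypothetical, and the keyword match is a naive heuristic, not a real SQL parser.

```python
import re
from typing import List

# Clauses this post says to avoid. Illustrative only, not an exhaustive list.
NON_PUSHDOWN_PATTERNS = {
    "DISTINCT": re.compile(r"\bDISTINCT\b", re.IGNORECASE),
    "ORDER BY": re.compile(r"\bORDER\s+BY\b", re.IGNORECASE),
}


def find_non_pushdown_clauses(sql: str) -> List[str]:
    """Return the names of the listed clauses that appear in the query text.

    This is a plain keyword search: a keyword inside a string literal or a
    comment would also match, so treat the result as a hint only.
    """
    return [
        name
        for name, pattern in NON_PUSHDOWN_PATTERNS.items()
        if pattern.search(sql)
    ]


# Hypothetical queries against a hypothetical external table.
friendly = """
SELECT region, COUNT(*), SUM(amount)
FROM spectrum.sales
WHERE product LIKE 'A%'
GROUP BY region
"""
unfriendly = "SELECT DISTINCT region FROM spectrum.sales ORDER BY region"

print(find_non_pushdown_clauses(friendly))    # []
print(find_non_pushdown_clauses(unfriendly))  # ['DISTINCT', 'ORDER BY']
```

A check like this could run in a code review or CI step to prompt a second look at queries that touch external tables.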

There are a couple of ways to optimize query performance. I think one of the easiest ways is to use a columnar file format. Also, regarding permissions, in order to use Redshift Spectrum, database users must have permission to create temporary tables in the database. Access to the external data itself can be managed at the external schema level, which has a similar effect of controlling user permissions.
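The permissions described above could be sketched as a small Python helper that builds the corresponding `GRANT` statements. The helper name `spectrum_grants` and the database, schema, and group names are placeholders chosen for illustration; the sketch only produces SQL text and assumes you run it through whatever client you already use.

```python
import re
from typing import List

# Accept only simple unquoted identifiers, since values are interpolated into SQL.
_IDENTIFIER = re.compile(r"^[A-Za-z_][A-Za-z0-9_]*$")


def spectrum_grants(database: str, schema: str, group: str) -> List[str]:
    """Build GRANT statements for a user group that queries an external schema."""
    for value in (database, schema, group):
        if not _IDENTIFIER.match(value):
            raise ValueError(f"not a simple identifier: {value!r}")
    return [
        # Redshift Spectrum users need to be able to create temporary tables.
        f"GRANT TEMP ON DATABASE {database} TO GROUP {group};",
        # Schema-level access control for the external tables.
        f"GRANT USAGE ON SCHEMA {schema} TO GROUP {group};",
    ]


# Placeholder names, not taken from any real environment.
for statement in spectrum_grants("spectrumdb", "spectrum_schema", "spectrumusers"):
    print(statement)
```

Revoking access works the same way in reverse, with `REVOKE USAGE ON SCHEMA ... FROM GROUP ...`.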

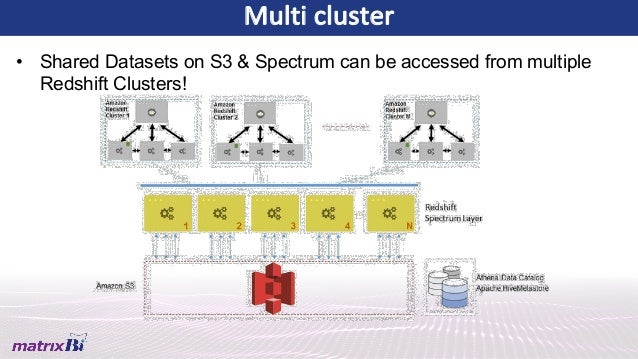

The only way to control user permissions on an individual external table is to use an AWS Glue Data Catalog that is enabled for AWS Lake Formation. Otherwise, if access control is required, consider granting or revoking permissions on the external schema.

It is impossible to perform UPDATE or DELETE operations on external tables. After all, they are objects stored in S3. Instead, create a new external table and insert only the values needed into it.

One consideration when using Enhanced VPC Routing involves the data catalog. Redshift Spectrum can access data catalogs in AWS Glue or Amazon Athena. To enable access to AWS Glue or Athena, configure a VPC with an internet gateway, NAT gateway, or a VPC endpoint. Then, configure the VPC security groups to allow outbound traffic to the public endpoints for AWS Glue and Athena. There are two things to be aware of here. One is that when using a VPC interface endpoint, communication between a VPC and AWS Glue is sent through the AWS network. The other is that endpoints are not free.

One benefit of using Amazon Redshift Enhanced VPC Routing is that all COPY and UNLOAD traffic is logged in VPC flow logs. Traffic originating from Redshift Spectrum to Amazon S3 doesn't pass through a VPC, so it is not logged in the VPC flow logs. When Redshift Spectrum accesses data in Amazon S3, it performs these operations in the context of the AWS account and respective role privileges. Log and audit Amazon S3 access using server access logging in AWS CloudTrail and Amazon S3.

For bucket access, use a bucket policy that restricts access only to specific principals, such as a specific AWS account or specific users, rather than to specific VPC endpoints. For the IAM role that is granted access to the bucket, use a trust relationship that allows the role to be assumed only by the Amazon Redshift service principal. Then, when attached to a cluster, the role can be used only in the context of Amazon Redshift and can't be shared outside of the cluster.
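To make the role and bucket policy advice more concrete, here is a minimal Python sketch that builds the two policy documents as dictionaries. The function names, the bucket name, and the role ARN are placeholders, and the S3 actions listed are an assumed minimum for read access; a real setup may need more (for example, write actions or KMS permissions).

```python
import json


def redshift_trust_policy() -> dict:
    """Trust policy that lets only the Amazon Redshift service assume the role."""
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Effect": "Allow",
                "Principal": {"Service": "redshift.amazonaws.com"},
                "Action": "sts:AssumeRole",
            }
        ],
    }


def principal_bucket_policy(bucket: str, role_arn: str) -> dict:
    """Bucket policy that grants read access to one specific principal.

    Access is scoped by principal, not by VPC endpoint.
    """
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Effect": "Allow",
                "Principal": {"AWS": role_arn},
                # Assumed minimum for reads; adjust to your needs.
                "Action": ["s3:GetObject", "s3:ListBucket"],
                "Resource": [
                    f"arn:aws:s3:::{bucket}",
                    f"arn:aws:s3:::{bucket}/*",
                ],
            }
        ],
    }


# Placeholder bucket name and role ARN for illustration only.
bucket_policy = principal_bucket_policy(
    "example-spectrum-bucket",
    "arn:aws:iam::123456789012:role/ExampleSpectrumRole",
)
print(json.dumps(redshift_trust_policy(), indent=2))
print(json.dumps(bucket_policy, indent=2))
```

The printed JSON can be pasted into the IAM and S3 consoles or passed to whatever tooling you use to manage policies.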
Access to data in an Amazon S3 bucket can be controlled by using a bucket policy attached to the bucket and an IAM role attached to the cluster. However, Redshift Spectrum cannot access data stored in Amazon S3 buckets that use a bucket policy that restricts access only to specified VPC endpoints. This is because Enhanced VPC Routing forces specific traffic to go through a VPC, while Redshift Spectrum runs on AWS-managed resources outside that VPC.
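One way to spot the problem described above is to scan a bucket policy for VPC-based conditions before pointing Redshift Spectrum at the bucket. The sketch below is a simple heuristic under stated assumptions: the function name `vpc_restricted_statements` and the sample policy are hypothetical, and it only looks for the `aws:SourceVpce` and `aws:SourceVpc` condition keys.

```python
from typing import List, Union

# Condition keys that tie access to a VPC endpoint or a VPC (compared in lowercase).
VPC_CONDITION_KEYS = {"aws:sourcevpce", "aws:sourcevpc"}


def vpc_restricted_statements(policy: dict) -> List[Union[str, int]]:
    """Return the Sid (or list index, if no Sid) of statements with a VPC condition."""
    statements = policy.get("Statement", [])
    if isinstance(statements, dict):  # a single statement may not be wrapped in a list
        statements = [statements]
    flagged = []
    for index, statement in enumerate(statements):
        condition = statement.get("Condition", {})
        # Condition maps an operator (e.g. StringNotEquals) to {key: value} pairs.
        for keys_and_values in condition.values():
            if any(key.lower() in VPC_CONDITION_KEYS for key in keys_and_values):
                flagged.append(statement.get("Sid", index))
                break
    return flagged


# Hypothetical policy that denies access unless traffic comes from one VPC endpoint.
sample_policy = {
    "Version": "2012-10-17",
    "Statement": [
        {
            "Sid": "DenyOutsideVpce",
            "Effect": "Deny",
            "Principal": "*",
            "Action": "s3:*",
            "Resource": "arn:aws:s3:::example-spectrum-bucket/*",
            "Condition": {"StringNotEquals": {"aws:SourceVpce": "vpce-0example"}},
        },
        {
            "Sid": "AllowRole",
            "Effect": "Allow",
            "Principal": {"AWS": "arn:aws:iam::123456789012:role/ExampleSpectrumRole"},
            "Action": "s3:GetObject",
            "Resource": "arn:aws:s3:::example-spectrum-bucket/*",
        },
    ],
}

print(vpc_restricted_statements(sample_policy))  # ['DenyOutsideVpce']
```

Any statement it reports is worth reviewing by hand, since the check does not evaluate whether the condition would actually block Redshift Spectrum.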

There are some things to remember when using Redshift Spectrum. I've mentioned a couple of them already, but they are important enough to be repeated. The Amazon Redshift cluster and the S3 bucket holding the data must be in the same AWS Region. When a Redshift cluster is configured to use Enhanced VPC Routing, it affects how security is implemented.
